Twitter + Geosocial intelligence: Interview about PetaJakarta.org with Dr. Tomas Holderness

Twitter has already proved to be a great tool for almost everything, from making awesome data visualizations to cool maps to (well, almost) location-based ads. Perhaps its most celebrated use of all has been its ability to rally the power of netizens for social activism.

Can Twitter be used during disaster management? More specifically, can Twitter serve as a valuable tool during flooding? We interviewed Dr. Tomas Holderness, the co-Chief Investigator of PetaJakarta.org, to learn more. (Short bio at the end of the page.)

Could you tell us about the PetaJakarta.org project for our Geoawesomeness readers?

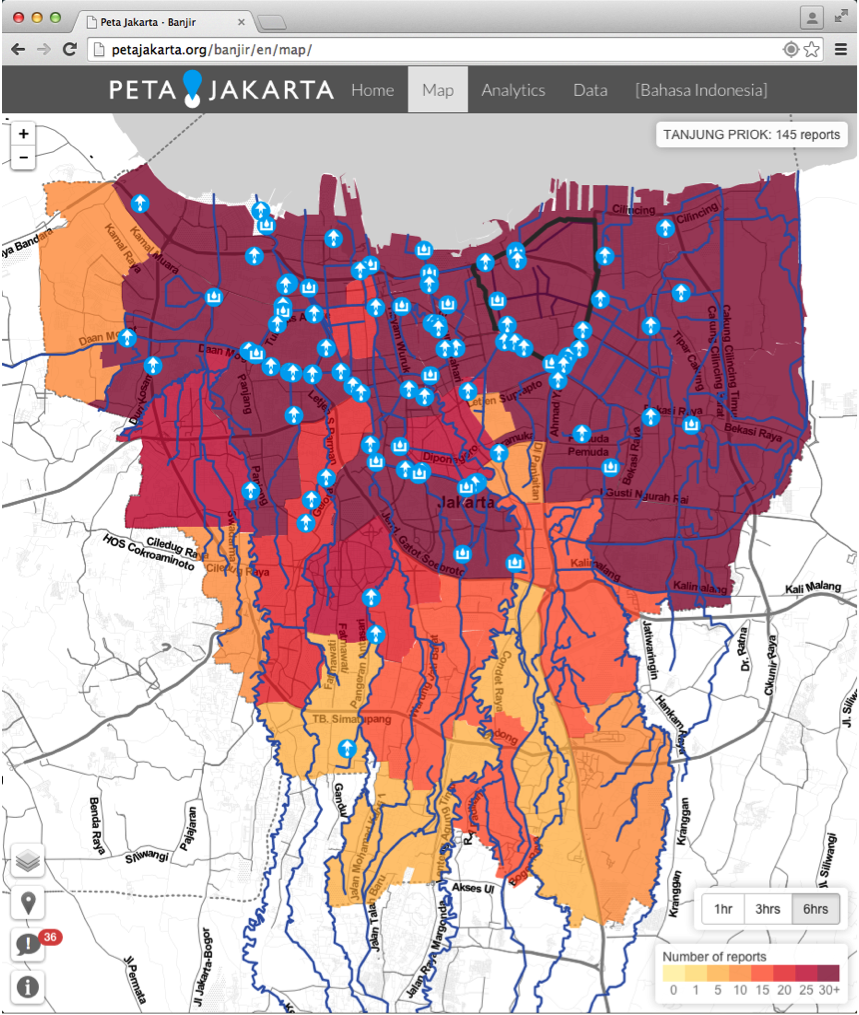

PetaJakarta.org (Map Jakarta) is a real-time map of flooding in Jakarta created using tweets from members of the public. The really cool thing about the project is that during floods we automatically reach out to users on the Twitter platform, asking them to confirm the flood situation at their location. We’re very grateful to Twitter for letting us do this; as far as I know, it’s not something that’s been tried before. To submit a report, a user simply has to send us a geolocated tweet with the keyword “banjir” (flood), and their report is added to the map. We’re working directly with the Jakarta Emergency Management Agency (BPBD DKI) and Twitter to think about new ways of using social media to gather information during extreme weather events – the term we use for this is ‘geosocial intelligence’.
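For readers who like to see ideas in code, here is a minimal sketch of the reporting rule described above: a tweet counts as a flood report only if it is geolocated and contains the keyword “banjir”. The tweet shape (a dictionary with `text` and `coordinates` keys) and the function name `to_flood_report` are assumptions made for illustration; this is not the project's actual code or the real Twitter payload format.

```python
from typing import Optional

KEYWORD = "banjir"  # Indonesian for "flood", the keyword named in the interview


def to_flood_report(tweet: dict) -> Optional[dict]:
    """Return a map-ready report, or None if the tweet does not qualify.

    The tweet shape (keys "text" and "coordinates") is a simplified,
    hypothetical one, not the actual Twitter API payload.
    """
    text = tweet.get("text", "")
    coords = tweet.get("coordinates")  # assumed (longitude, latitude) or None

    # Rule 1: the tweet must mention the keyword (case-insensitive).
    if KEYWORD not in text.lower():
        return None
    # Rule 2: the tweet must carry a location, otherwise it cannot be mapped.
    if coords is None:
        return None

    lon, lat = coords
    return {"text": text, "lon": lon, "lat": lat}
```

A tweet that mentions flooding but has no location is skipped in this sketch, since it could not be placed on the map.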

Twitter Inc. visits the Emergency Management Agency (BPBD DKI) in Jakarta to see the PetaJakarta.org system live in the control room. Image courtesy of Tomas Holderness.

How did it all start?

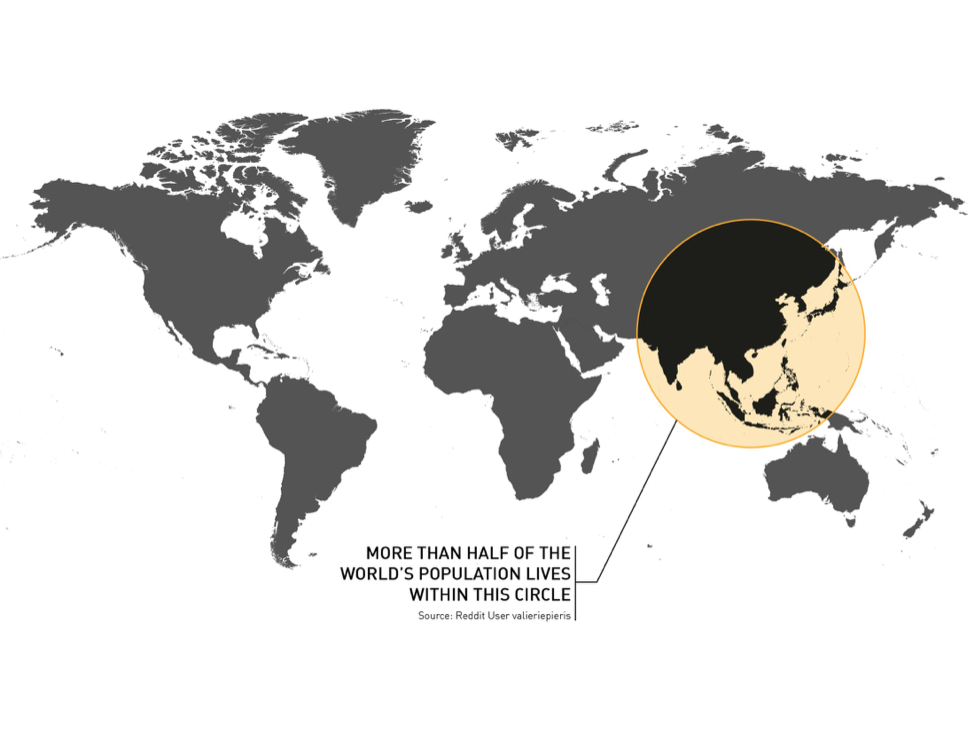

PetaJakarta.org started as a conversation between Dr Etienne Turpin, co-Chief Investigator of PetaJakarta.org, and myself. We were trying to work out how better to collect geospatial data about flooding in Jakarta. Etienne mentioned that Indonesians are really passionate about their social media and Jakarta has one of the highest numbers of Twitter users worldwide. There’s almost no real-time geospatial data about flooding but everyone’s tweeting about it, so we thought we could try asking the public to tell us what the situation was using social media. In return we made the map publicly available as a tool that citizens can use to see the situation, and the same data is used by the government of Jakarta as a real-time indicator of flood activity.

As a researcher, what was the most compelling thing about this project that made you so interested in it?

We’re interested in the resilience of Southeast Asian megacities to extreme weather events – so we hope that PetaJakarta.org will provide us with a detailed source of data to better understand flooding in Jakarta. One of the really interesting things we’ve seen is the potential for social media to enable a process of civic co-management – that is, citizens and government communicating and sharing data to improve flood response.

Twitter is an interesting platform for collecting information. What does the usual tweet contain besides the specific hashtag and GPS coordinates? Would it be possible to include other data?

Well, a lot of people send us photos of the flooding. These are a very insightful source of information about the situation on the ground, and were used by BPBD this year to verify flood reports from other sources. Images aren’t actually included in the tweets; they’re represented in the text as a link, along with other metadata such as time, username and user device. Even though the data is made publicly available by the user, when we store a tweet at PetaJakarta.org we first anonymize it and remove the user’s metadata. It’s important for our users to know that we only keep the parts of the data we need for the map.
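One simple way to picture “only keep the parts of the data we need” is an allow-list: copy the fields the map needs and drop everything else. The sketch below is an illustration under assumptions, not PetaJakarta.org's actual procedure; the field names (`text`, `created_at`, `lon`, `lat`, `username`, `device`) are hypothetical.

```python
# Assumed set of fields the map needs; everything else is discarded.
KEPT_FIELDS = ("text", "created_at", "lon", "lat")


def anonymize(tweet: dict) -> dict:
    """Return a copy of the tweet containing only the allow-listed fields."""
    return {key: tweet[key] for key in KEPT_FIELDS if key in tweet}


raw = {
    "text": "banjir 50 cm",
    "created_at": "2015-01-23T07:15:00Z",
    "lon": 106.81,
    "lat": -6.26,
    "username": "example_user",  # user metadata, dropped before storage
    "device": "example_phone",   # user metadata, dropped before storage
}
stored = anonymize(raw)
```

An allow-list has the useful property that any new metadata field a platform adds later is dropped by default rather than stored by accident.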

Jakarta has one of the highest numbers of Twitter users in the world; PetaJakarta.org is harnessing this communication system to collect and disseminate information about flooding in near-real time. Photo courtesy of Ariel Shepherd.

Did you find any interesting trends/patterns from the tweets?

We’re still crunching the data (the flooding season ended in March this year), but there are definite spatio-temporal patterns in the data that correspond to the locations of flooding. The plan is to publish a White Paper about the project in the coming months, which will be available to anyone, and will include a description of what we found.

One of the most exciting things we saw was a lot of tweets containing reports of flood heights. I think there’s definitely scope to expand the research to see if we can make use of these. The plan is to work with National Geographic Indonesia to help train some of the “geo community” in Jakarta to use a different hashtag to report estimates of water height.

Screenshot from the system in action during flooding in January 2015. Image courtesy of PetaJakarta.org

Are there plans to expand to other social media, maybe even SMS based updates?

Not new social media platforms per se; however, a number of media organizations in Jakarta have offered to collaborate with us and provide access to data gathered from citizen-journalists, which could be added as another layer on the map. I think this highlights one of the really interesting areas of geospatial in IT at the moment – the prevalence of data APIs offers new sources and combinations of data, which can easily be shared and visualized using web maps.

What was it like to be working on an application as time-critical as emergency management?

It definitely adds impetus to the project development – from the technological side we did a lot of testing before the rainy season to make sure that PetaJakarta.org could handle a real-time load of tweets and users. We actually wrote our own geosocial intelligence framework called “CogniCity” for handling and serving the data. We created CogniCity from scratch using the NodeJS framework, and we use a PostGIS database for the back-end. This means the application is extremely scalable and we can process around 250 tweets per second. Also, we worked with the emergency management agency (BPBD) right from the start so that we could understand how their systems work and how PetaJakarta.org could complement their existing technologies.
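To give a feel for what storing a geolocated report in PostGIS can look like, here is a small sketch. CogniCity itself is written in NodeJS; this Python snippet only illustrates the kind of SQL involved. The table name `flood_reports`, its columns and the function `build_insert` are hypothetical, while `ST_MakePoint` and `ST_SetSRID` are standard PostGIS functions. The `%s` placeholders follow the style used by Python drivers such as psycopg.

```python
from typing import Tuple


def build_insert(report: dict) -> Tuple[str, tuple]:
    """Build a parameterized INSERT for a hypothetical flood_reports table.

    ST_MakePoint takes (x, y), i.e. (longitude, latitude); SRID 4326 is
    WGS 84, the coordinate system used by GPS.
    """
    sql = (
        "INSERT INTO flood_reports (created_at, text, geom) "
        "VALUES (%s, %s, ST_SetSRID(ST_MakePoint(%s, %s), 4326))"
    )
    # Values are passed separately so the driver can escape them safely.
    params = (report["created_at"], report["text"], report["lon"], report["lat"])
    return sql, params


sql, params = build_insert(
    {"created_at": "2015-01-23T07:15:00Z", "text": "banjir", "lon": 106.81, "lat": -6.26}
)
```

Keeping the geometry in a proper spatial column, rather than as two plain numbers, is what would let the database answer spatial questions such as which reports fall inside a given district.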

What were the biggest challenges that you faced during the project?

Perhaps the biggest challenge we faced was explaining to our users (both the public and the government) how the system works and what its limitations are. It’s important to realize that we’re not trying to replace existing data sources and frameworks for disaster risk management; instead we’re trying to complement them. There are 28 million people in Jakarta, over 50% of whom have more than one mobile phone – that’s a lot of potential sensors. And in this context it’s important to note that we’re not advocating a big data or a machine learning approach – it’s more of a big crowd technique. We’re asking humans to use the devices in their pockets and the world’s largest communication network (the internet) to report on the urban environment. We were quite successful this year, but there’s still room for improvement – I think this is the real challenge.

It’s great that you guys have an open source and open data policy. How can we help you, be it coding or other means?

Thanks! Yes, taking an open source approach is really at the heart of the project. If people are going to share their data with the government and vice versa, they should be able to see what we’re collecting and how we’re doing it. We’d love to see contributions and ideas from the community, but most of all I’d love to see other people grab the software and develop geosocial intelligence for civic co-management in their city, in ways that we haven’t even imagined yet! We built CogniCity from first principles to be transferable and multi-lingual – so it can work for anything people are talking about on Twitter.

It’s interesting that you use the term “Geosocial Intelligence” in your column at IEEE (I used a similar one, “Geo-social activism”, back in 2013). Do you think we are going to see an explosion in the number of crowdsourced applications like PetaJakarta.org? What’s your prediction for the future?

I certainly hope so. I think the geoinformatics space is pretty interesting right now, especially in developing nations where there’s been a big focus on making new collections of data open and available. In this context the potential to change information sharing and contributions to governance (e.g. through civic co-management) is huge. I think that as the next billion people in Africa and Asia connect to the internet for the first time, using their smartphones, we should ask ourselves – what will they be using the internet for? And just as importantly, how do we map it?

The region of potential for big-crowd sourcing in 21st century Southeast Asian megacities. Image courtesy of PetaJakarta.org.

Short bio

Dr Tomas Holderness is Geomatics Research Fellow at the SMART Infrastructure Facility, University of Wollongong, Australia, where he is the co-Chief Investigator of the PetaJakarta.org project. Tomas is a Fellow of the Royal Geographical Society, and has a PhD in Geomatics from Newcastle University, UK.