Maps that move the world – Interview with Ro Gupta, CARMERA #TheNextGeo

Maps move the world. Whether it is your last-mile delivery service, your daily commute, commercial shipping services or the robotaxis of the future, all of these require maps. One startup that is working on addressing all of these needs is the Seattle-based CARMERA.

CARMERA is a spatial AI company, built on the revolution of vehicular crowdsourcing and remote sensing to capture street-level change, at global breadth and high-definition depth. I had the chance to talk to Ro Gupta, the founder and CEO of the startup, to learn more. Enjoy the interview!

Q1: Hi Ro, thanks for taking the time! Before we dive into your work, let us discuss your spatial connection. What prompted you to start an HD mapping startup?

I actually think a lot of it had to do with being born in a dense, chaotic city in a developing country (Kolkata, India) and spending some of my working life (e.g. NGO in Mozambique) in areas of the world where you don’t take road infrastructure for granted. The idea of digitizing and “packetizing” roads towards eradicating the scourges of traffic fatalities, immobility for the underserved, pollution, gridlock, etc. is pretty near and dear to me given some of the physical environments I’ve been exposed to. Losing a family member at an early age to a car crash that would have been prevented with modern automation and mapping technology probably had something to do with it as well subconsciously.

I also lucked into getting exposed to some of the concepts we are productionizing today as a student at Princeton University. This includes the early forms of machine learning, computer vision, neural networks and autonomous mobility system design, all of which I got to tinker with in a dreary engineering building in the late 90s.

Ultimately, CARMERA was founded in 2015, when all of those concepts and more were getting real, and multiple people in the maps and autonomous industry impressed upon me how important next-generation mapping was going to be, and how big of a gap there was in required “temporal density” of street-level data. At its core, it was a question of scalability: How do you do what Google Street View does, but drastically lower the cost so that you are able to get that information in near real-time?

We looked at a couple of applications in real estate and city planning when we started out in the mid part of last decade, but found the most acute need initially in the burgeoning autonomous driving industry. HD maps were then and remain today critical to core AV functions—perception, localization, and path planning, especially in dynamic or complicated environments where things change a lot. We believe HD may converge to an “MD” (medium definition) steady state in the future, but the core problem of change management at scale will always be our north star.

Q2: Could you explain the differences between creating maps for humans versus maps for autonomous vehicles? At a fundamental level, maps are used to answer the same questions: ‘Where am I?’ ‘What is around me?’ Is the difference just in the level of detail and the frequency of data updates, or is there more to it?

Historically, there was a clear difference. “Human maps” were really all about research or navigational information: What are the steps that will take me from point A to point B? The user’s personal knowledge provided all the interpretive context. In contrast, maps for machine-first applications, like HD maps for autonomous vehicles, need to supply the machine with as many priors and semantic insights as possible: How do I make a turn through this intersection? What does this triangular sign mean? What should I look out for if I see a curb cutout?

This distinction between human-centric and machine-centric mapping, however, is starting to blur as consumer applications become more demanding and machine perception stacks become more sophisticated. In fact, as I alluded to, we’ve posited that in the next few years we’re going to see the emergence of a new standard—the “medium definition” map—fusing the most critical elements of HD precision and insight, with the unmatched scalability of SD (standard definition). This will allow humans to leverage map data more like precisely tuned machines do, and machines to make decisions more like an experienced, attentive human would.

One example is package delivery. Today, the driver would greatly benefit from being guided by a maps app precisely to the right part of the curb that optimizes for safety, dropoff proximity, vacancy probability, etc. Those same inputs, plus a bit more vector information on how best to pull into the curb space, are what an autonomous delivery vehicle needs to perform the same task. Whereas today we have SD maps serving the human and HD maps serving the machine, both of those applications would be served by an MD steady state in the future.

Q3: In terms of data updates, most solutions for autonomous driving mention frequently updated maps as a requirement. Your website even mentions “Change-as-a-Service,” could you tell us more about this? How often do these maps need to be updated? Do you need specially-equipped vehicles for it? How does one go about creating living maps?

Change-as-a-Service is a modular offering that layers on top of existing HD and/or SD maps, providing a feed of traffic-impacting events, vector updates and accompanying metadata. This approach is really a response to what we were seeing in the market, via our work with leading automakers, L4 robotaxi companies, and the major mapping incumbents.

Specifically, we saw that the initial problem of base map production had largely been solved—albeit somewhat expensively and with quality varying from provider to provider. The industry, however, still needed a solution for map maintenance—one that both was cost-effective and wouldn’t require a “rip and replace” of customers’ existing mapping stacks.

Our CaaS offering responds to this need, primarily, by crowdsourcing change data via cameras mounted on the vehicles of our commercial delivery fleet partners. (They get a free telematics system in exchange.) This approach is significantly cheaper than traditional LiDAR-based resurveying and offers far greater control than consumer vehicle data alone. Secondly, CaaS is built on a totally format-agnostic pipeline, and data is provided via an open API, ensuring that it can work with whatever hardware or map technology the customer may already have in place.

https://www.youtube.com/watch?v=z_ca5USI10I

Q4: How do you ensure that the map data is accurate, reliable, and consistently up-to-date when the data needs to be refreshed every few hours?

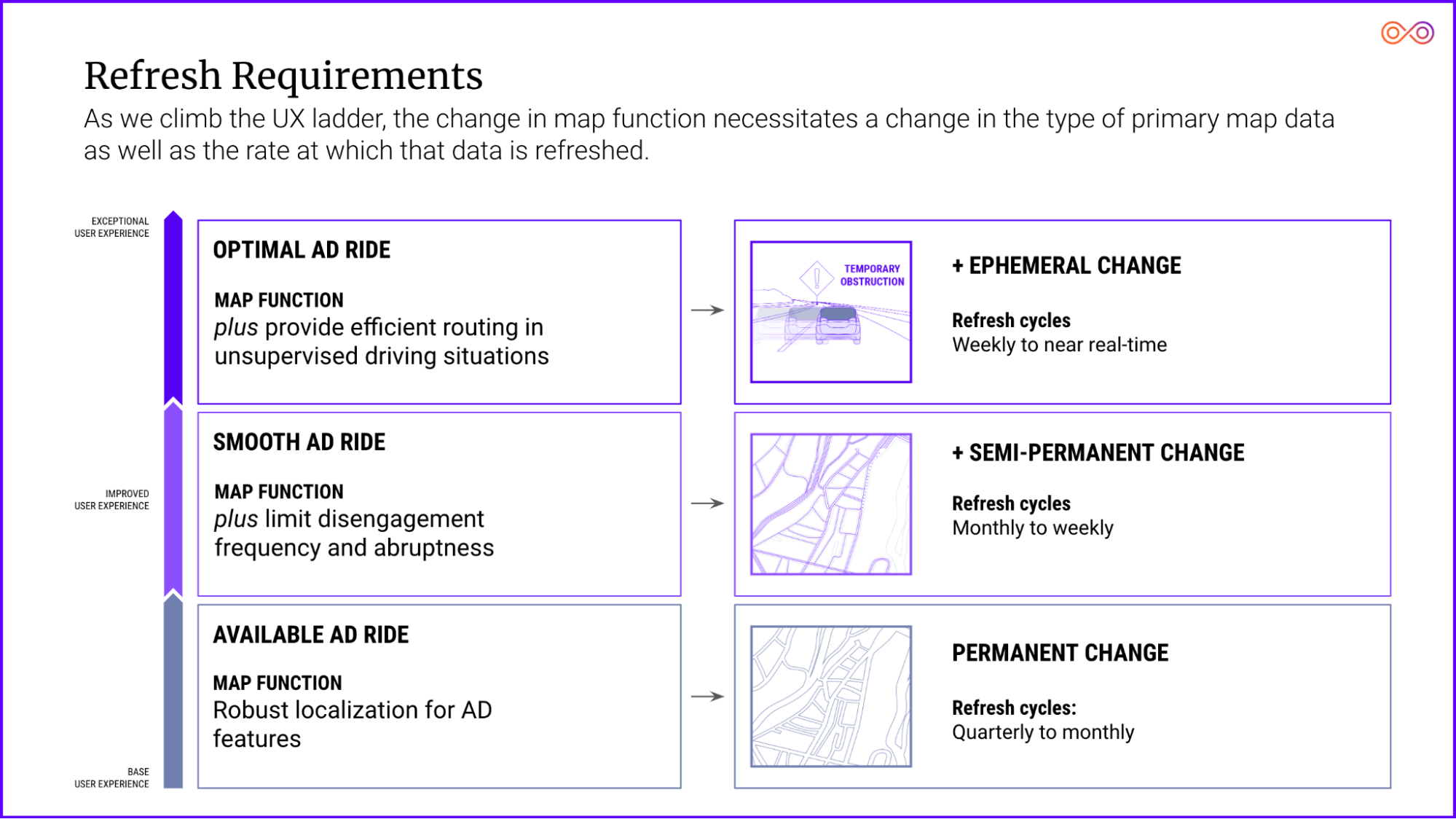

Primarily, the update cadence is really dependent upon the customer’s needs, which, in turn, is usually a function of the type of change and the operational driving domain they’re interested in. Street construction in a very complex city, like New York, for example, needs a much higher refresh rate than something that happens very infrequently, like architectural change on a highway in rural Montana.

We are able to meet customer refresh requirements by managing the portfolio of data sources we’re pulling from. We work with a variety of partners, from major delivery companies to commercial services companies to real estate firms, as well as contracted drivers. Each fleet type has a specific profile in terms of the area it covers and how frequently it drives through that area. For example, a company like FedEx is probably traveling most roads in the commercial core of a major city every day, whereas a commercial catering company like Aramark may be hitting those exact same streets but only twice a week. Blending those fleet types allows us to meet each customer’s specific coverage requirements, and that blending is core to our operational IP.
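To make the fleet-blending idea concrete, here is a minimal back-of-the-envelope sketch. It is not CARMERA's actual method: the `Fleet` class, the function names, and the simplifying assumption that passes over a street are evenly spread through the week are all hypothetical, chosen only to illustrate how a daily fleet and a twice-weekly fleet combine into a shorter average gap between observations.

```python
from dataclasses import dataclass

HOURS_PER_WEEK = 7 * 24


@dataclass(frozen=True)
class Fleet:
    """Hypothetical fleet profile for a single street segment."""
    name: str
    passes_per_week: float  # how often this fleet drives the segment


def mean_gap_hours(fleets):
    """Average hours between observations, assuming evenly spread passes."""
    total_passes = sum(f.passes_per_week for f in fleets)
    if total_passes <= 0:
        return float("inf")  # nobody drives here, so the map never refreshes
    return HOURS_PER_WEEK / total_passes


def meets_refresh(fleets, required_gap_hours):
    """True if the blended fleets revisit the segment often enough on average."""
    return mean_gap_hours(fleets) <= required_gap_hours


# Illustrative numbers taken from the interview: one fleet passes daily,
# another passes twice a week.
daily_fleet = Fleet("daily delivery fleet", 7.0)
twice_weekly_fleet = Fleet("twice-weekly service fleet", 2.0)
blend = [daily_fleet, twice_weekly_fleet]

print(round(mean_gap_hours(blend), 1))  # 18.7
```

Under these assumptions, the twice-weekly fleet alone leaves an 84-hour average gap, the daily fleet alone 24 hours, and the blend roughly 18.7 hours. A real system would also need to account for time-of-day clustering and uneven spatial coverage.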

In terms of ensuring accuracy, we control the road video and other data from start to finish. This enables us to perform audits to check for things such as false negatives, instances where the system incorrectly says no change exists. If you don’t control the video data, as is the case with incumbents, there’s no way to conduct such an audit other than by sampling alone, which is often cumbersome or unreliable.
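As a rough illustration of what a false-negative audit measures, the sketch below computes a false-negative rate from a set of reviewed road segments. The data layout (pairs of "system flagged a change" and "reviewer saw a change in the video") and the function name are assumptions made for this example, not a description of CARMERA's pipeline.

```python
def false_negative_rate(audited):
    """Share of real changes the system missed.

    `audited` is an iterable of (system_flagged_change, reviewer_saw_change)
    boolean pairs, one per audited road segment (hypothetical format).
    Returns None when the audit contains no real changes at all.
    """
    audited = list(audited)  # allow generators; we iterate twice
    missed = sum(1 for flagged, actual in audited if actual and not flagged)
    actual_changes = sum(1 for _, actual in audited if actual)
    if actual_changes == 0:
        return None
    return missed / actual_changes


# Made-up audit sample: three real changes, one of which the system missed.
sample = [
    (True, True),    # change detected correctly
    (False, True),   # false negative: real change, system said "no change"
    (False, False),  # correctly reported no change
    (True, True),    # change detected correctly
    (False, False),  # correctly reported no change
]

print(false_negative_rate(sample))
```

The key point is that the second element of each pair can only be filled in by someone who has access to the underlying video, which is why controlling the data end to end matters for this kind of check.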

Q5: The role of maps as a building block for autonomous vehicle navigation makes it a critical safety layer. Do you think compliance with safety standards is inevitable with respect to Safety of Life Applications or do you see another way moving forward?

AV safety standards are still in a nascent stage, although there are some good efforts underway such as ISO 21448 and UL 4600. It remains to be seen what the final frameworks are going to look like, but we are sensing that maps are likely to be viewed as valuable redundancy that may even be taken into account in actuarial modeling and underwriting, especially if they can help address “black box” problems with regard to why an autonomous technology made a certain decision.

Q6: Another aspect of maps that is rarely ever mentioned is the cost factor. In your opinion, are there already competitively priced AV maps fit for use as robotaxis versus regular taxis? If not, how far do you believe we are from that and where do you see the maximum potential for innovation?

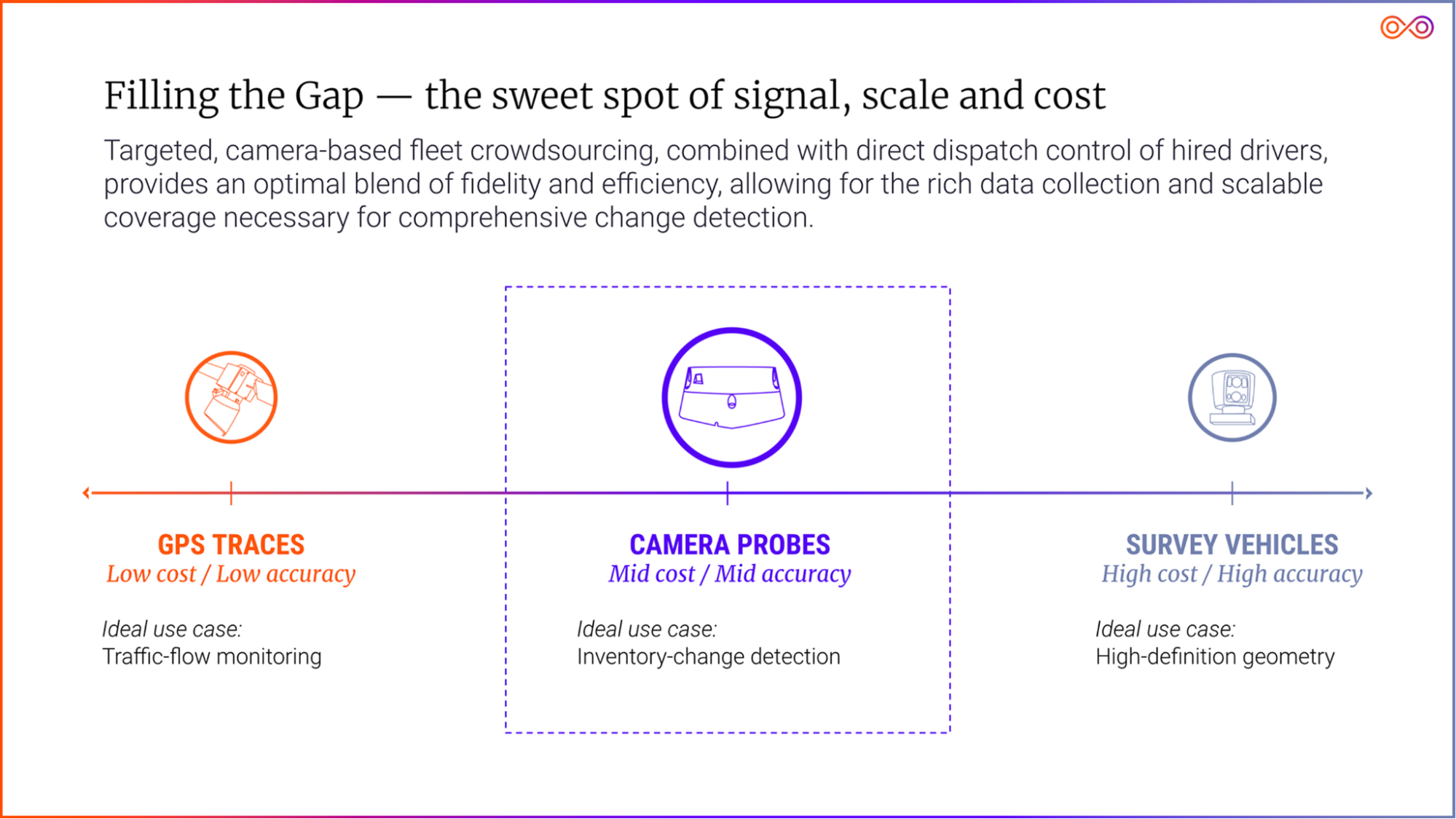

As I sort of alluded to before, costs really become an issue when you’re talking about maintaining maps. The prevailing solution today, namely rescanning roads with LiDAR survey rigs (selectively or en masse), is untenable by roughly a factor of 100x in cost per mile. That’s really where we’re seeing the innovation. There are a lot of approaches: aggregated consumer vehicle data, which I already mentioned; aerial/satellite-assisted mapping; and so on. We think incorporating fleet video–based updates as well represents the ideal, holistic balance of fidelity, speed and cost at scale.

Q7: Most of the conversations around maps for autonomous vehicles have been focused on their use for localization. Is this too narrow of a view with respect to what role maps play in the future of autonomous driving? What are some other cases you could envision for the use of maps in the future?

Certainly today, HD maps also have a perception function, acting as an additional “sensor.” This sensor helps the autonomous vehicle verify what it’s seeing or aids in navigation through areas where signage or road markings may be obstructed. As a matter of fact, the first live application of a CARMERA map was in an autonomous vehicle that was attempting to navigate the wintery streets of Michigan, where the snow had obstructed lane markings.

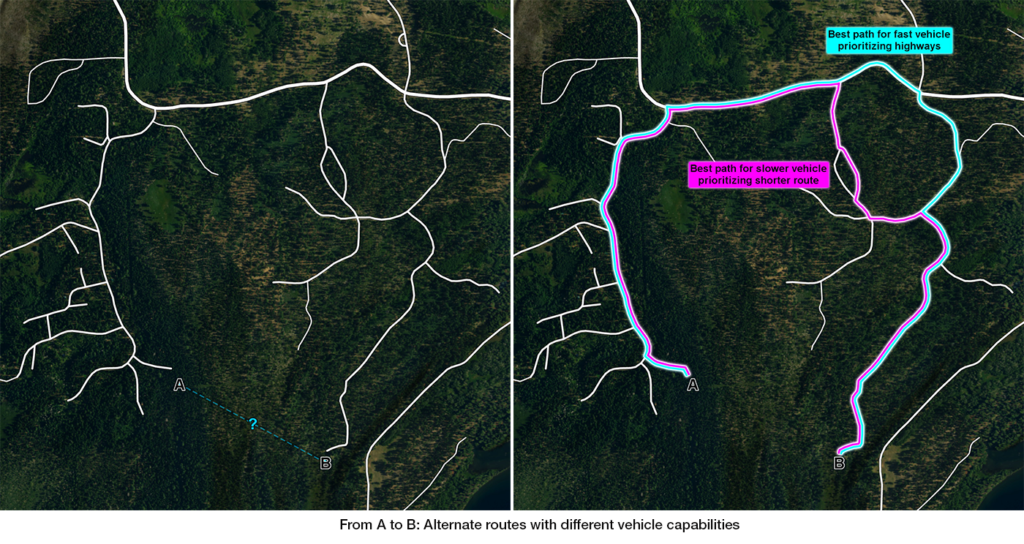

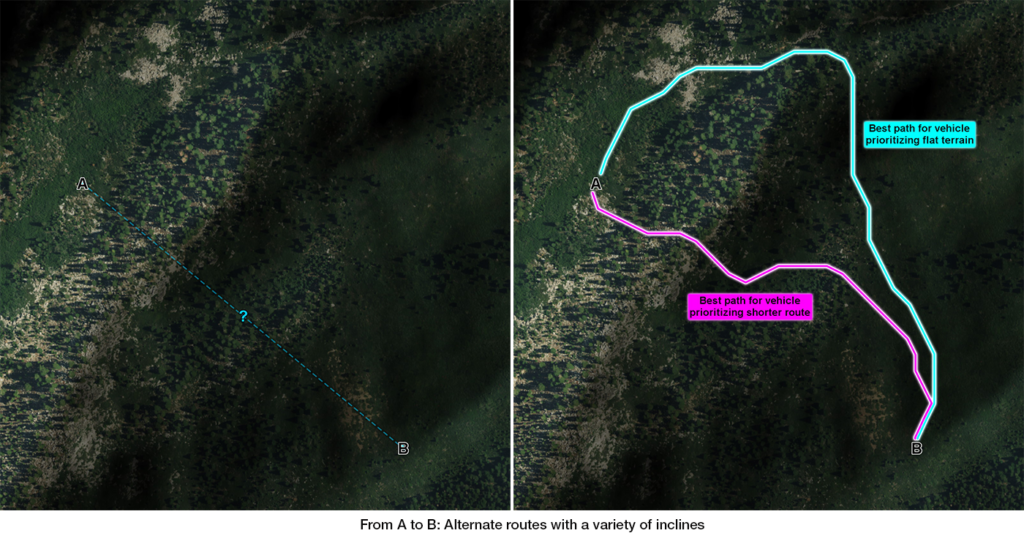

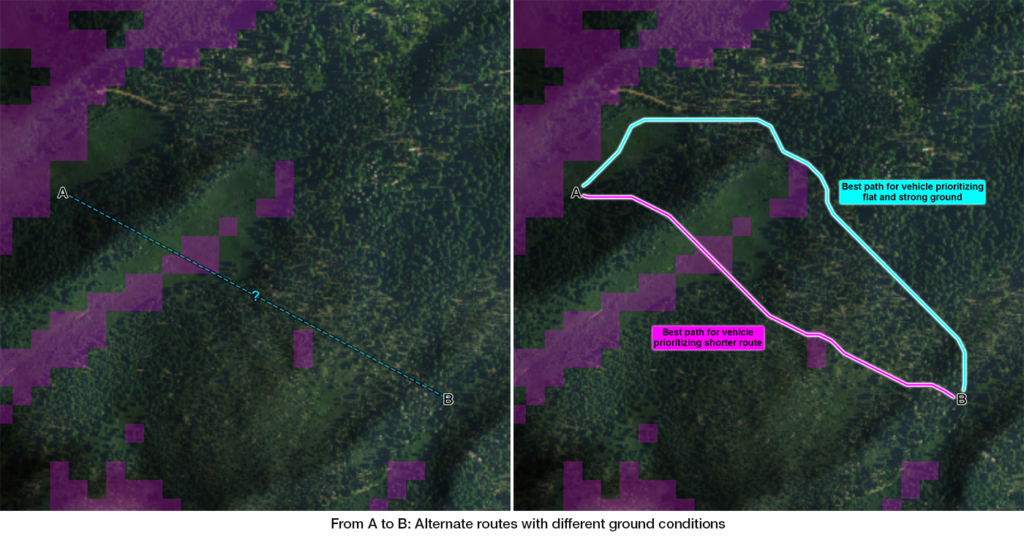

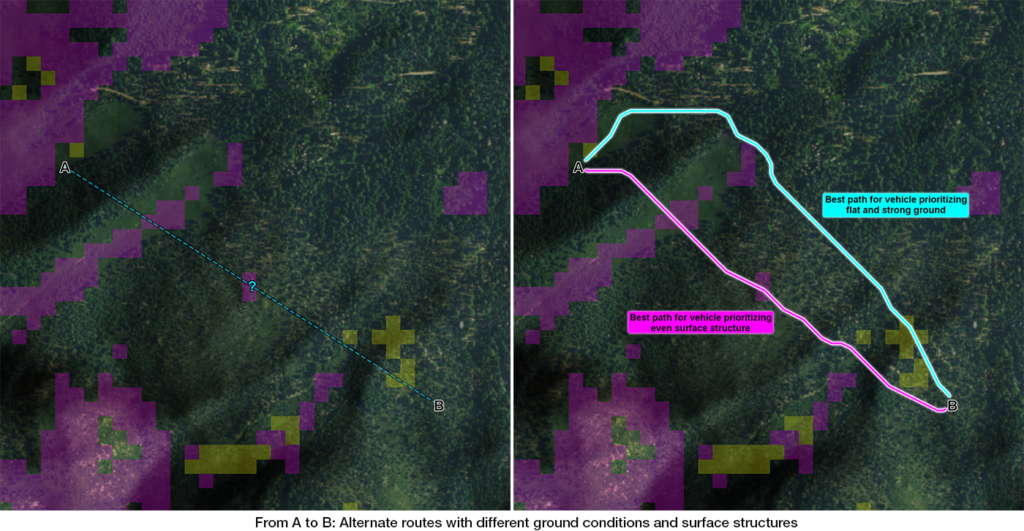

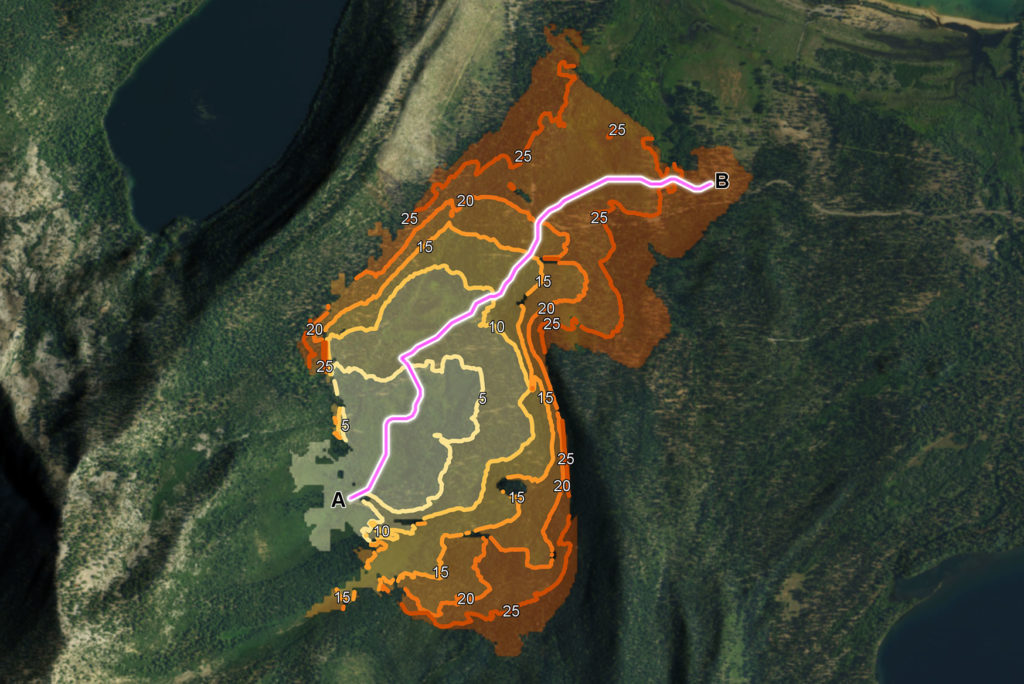

In the future, maps will play a huge role in optimal path planning. As the core AV technology becomes commoditized, the quality of the ride will be of greater and greater importance. Customers will want their autonomous vehicle to be able to choose the fastest / safest / most scenic / quietest / etc. path. It is important to note that any path planning that occurs beyond a vehicle’s sight horizon will be hugely dependent on maps.

All of this is only referring to the drive experience. There are any number of applications as we think about the future in-cabin experience, but I think we’re still a ways away on that front.

Q8: In a recent blog post, you spoke about the convergence of standard-definition (SD) and high-definition (HD) maps into what you call MD maps. In the near future, do you see MD maps opening up new models for monetization of map data beyond the regular navigation and logistics applications?

I think we’re just scratching the surface in terms of the monetization of map data—whether MD, SD, or HD. Especially as automated vehicles really hit their stride, you can see the potential for a host of monetization opportunities related to powering navigation decisions. Those can be anything from promoting and automating EV charging stops along your trip, to incorporating carbon savings for a given route.

Q9: CARMERA operates under a “Data-As-A-Service” business model which makes it seem like you are a direct competitor to the mapping giants. Do you also see it that way?

No, we actually have friendly relationships with most of the major mapping players and have been working with several of them. We’ve taken a modular approach to our technology for those platforms. We also do full-stack HD mapping, but generally only for Level 4 / mobility-as-a-service use cases, like robotaxis and delivery bots. There, our competition tends to be build vs. buy.

Q10: In your opinion, what is the biggest challenge with HD mapping today?

The biggest challenge is scaling them at acceptable fidelity and cost, and maintaining them at acceptable speed, fidelity and cost.

Q11: What is the one thing that you wish more people understood about HD mapping in general?

I wish people understood how the complexity of HD mapping is a beautiful reflection of the incredible power of the human brain. Ultimately, that’s what HD maps are really trying to do—replicate a human’s full understanding of the road. Human drivers are constantly processing information on several dimensions:

- Knowledge: What do I know to be true here?

- Wisdom: What has past experience taught me?

- Foresight: What should I anticipate coming up?

The various layers of the HD map (the geometric layer, the semantic layer, the event layer, etc.) are really meant to replicate that nuanced analysis the human brain is constantly engaged in. When you see a visualized HD map with its endless web of lines and arcs, you should be awed not just by the detail of the map, but by the incredible feats of computation your brain is doing on even the simplest of drives.

Q12: Okay, this is a tricky question: On a scale of 1 to 10, how geo-awesome do you feel today?

Q13: What’s the best way to contact you and your team in case someone is interested in getting in touch?

Visit CARMERA.com or hit us up at hello@carmera.com, LinkedIn or Twitter.

Q14: Do you have any closing remarks for people looking to start their own company?

Embrace risk—the good kind.