HERE’s Automated Driving Project: #HEREInterview

Automated driving and driverless cars are definitely among the most exciting tech projects in development, and they have been in the news constantly for a while now.

We at Geoawesomeness had the pleasure of talking to Mr. Dietmar Rabel, Head of Product Management for Automated Driving, and Mr. Christopher Lawton, Communications Manager, about HERE’s vision for the automated driving project. Read on.

Tell us about HERE’s Automated Driving Project

Dietmar Rabel (DR): We started 16 years ago, to be precise, with map-enabled aided systems. The first time vehicles used maps, it was to enhance vehicle functions, such as helping the front lights steer in the right direction. This was the very beginning of using maps for aided systems.

Our vision at the Automated Driving group is to help the automotive industry with our Automated Driving Cloud capabilities. It is basically a cloud backend that provides map data, real-time information about the current status of the road, and so on to cars, so that autonomous cars can make intelligent and informed decisions.

A few years ago, we started partnering with a couple of OEMs to investigate possibilities in the automated driving area. For the first public event, we partnered with Daimler for the Frankfurt Motor Show, where a Mercedes test vehicle drove autonomously using our map data for the Bertha Benz Memorial Drive.

How does HERE go about making HD maps?

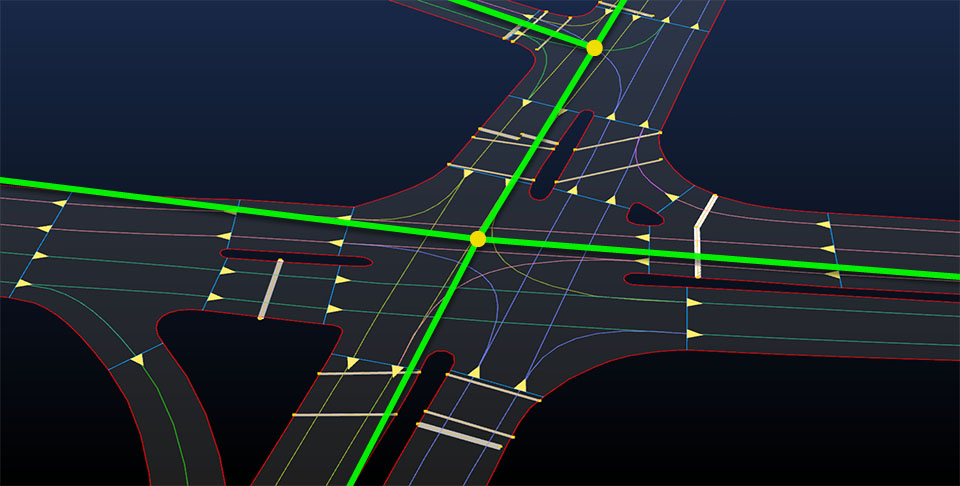

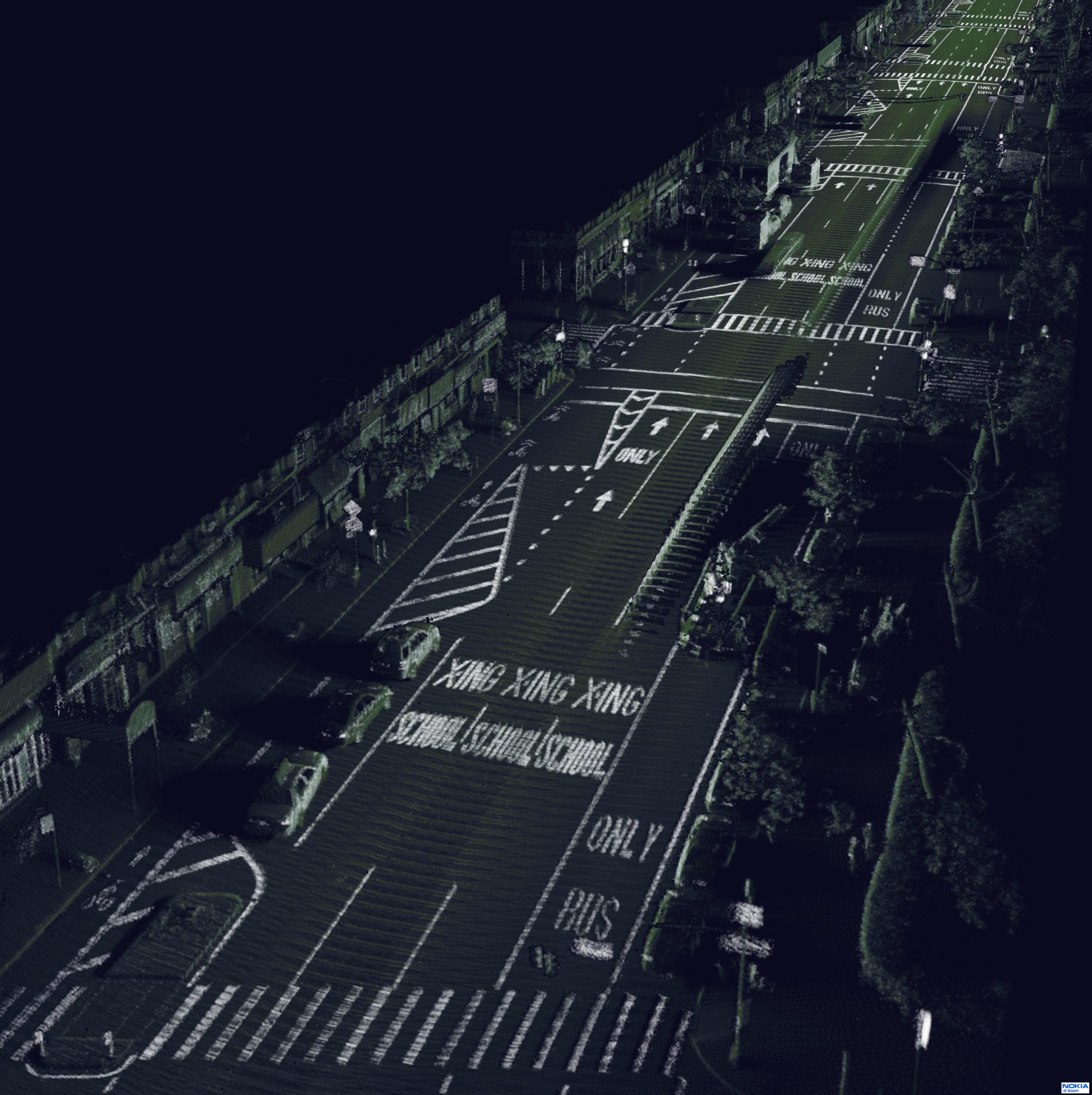

DR: Before we discuss the process of producing HD maps, I would like to illustrate the importance of maintaining them. For current navigation systems, it is not an issue if your map data has an inaccuracy of a few meters, but this is not something we can have in the HD maps that autonomous cars will use. Traditionally, mapping data was collected and then updated via governments, user feedback or simply by driving in that area again. When we started collecting HD maps with 20 cm accuracy, we used HERE’s Lidar technology, cameras and location sensors like GPS to collect precise data and model the roads precisely.

But simply collecting the data, i.e. the HD maps, does not let us maintain it. For maintenance we will have to tap into crowdsourcing. We expect that quite a lot of vehicles driving either autonomously or semi-autonomously will have sensors that detect changes in the real world that deviate from our precise HD maps and models. The vehicles will then report this information via the cloud. With crowdsourcing technology, we can maintain the HD maps and also inform other users about changes via the cloud. This is the approach at a high level.

Does every autonomous car need to have such high-end sensors for crowdsourcing?

DR: The individual vehicles don’t need to have the same level of accuracy as our mapping cars, which house really expensive Lidar sensors. The sensors in these individual vehicles simply need to detect whether something is not right anymore, i.e. compare the environment model that they are building against our model and check for a mismatch. This helps us investigate these differences, and maybe even send one of our HD mapping cars to resurvey. Over time, of course, sensors will get more accurate, we will get better information and we can do better crowdsourcing. But no, not every car requires such precise sensors.
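The mismatch check described in this answer can be illustrated with a minimal Python sketch. It is not HERE's implementation: the `Landmark` type, the `find_mismatches` function, the shared landmark identifiers and the 0.5 m tolerance are all assumptions made for illustration. The point it shows is that a vehicle with coarse sensors only has to flag disagreement with the map, not re-survey the road.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Landmark:
    """Hypothetical map feature with a position in a local metric frame."""
    landmark_id: str
    x_m: float
    y_m: float


def find_mismatches(map_landmarks, observed, tolerance_m=0.5):
    """Compare what the vehicle saw with what the map expects.

    Returns (landmark_id, reason) pairs for every map landmark that the
    vehicle did not see at all ("missing") or saw further away from its
    mapped position than tolerance_m ("moved"). The tolerance is an
    assumed value, deliberately looser than survey-grade accuracy.
    """
    observed_by_id = {lm.landmark_id: lm for lm in observed}
    mismatches = []
    for expected in map_landmarks:
        seen = observed_by_id.get(expected.landmark_id)
        if seen is None:
            mismatches.append((expected.landmark_id, "missing"))
            continue
        offset = hypot(seen.x_m - expected.x_m, seen.y_m - expected.y_m)
        if offset > tolerance_m:
            mismatches.append((expected.landmark_id, "moved"))
    return mismatches
```

In such a setup, a non-empty result would be what the vehicle reports to the cloud, where it could trigger further investigation or a resurvey.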

Is crowdsourcing the process of HD mapping an option?

DR: It does take time to build the database for maps, but it is entirely doable for us to produce HD maps for motorways using the sensors the OEMs are rolling out. One of our strengths is a global specification: the same algorithms work on our data regardless of the geographic location of the data or how it was collected. The level of accuracy required from day one is just too difficult for a crowdsourcing methodology of data collection, as the number of vehicles that have such sensors is really low, and for crowdsourcing of HD maps we need a lot of such vehicles. That being said, crowdsourcing will play an important role in the maintenance of the data.

How do you mitigate bad weather when it comes to autonomous driving?

DR: The challenge in localizing the vehicle is that GPS can only be used to get a rough overall position; we then need the lane-marking sensors to figure out the lanes, and this is going to be difficult under bad weather conditions.

In addition to lane markings, we also use localization objects, such as corners of a road, both along the road and across it. This helps the lidar and radar measure their distance from such markers to accurately pinpoint the location of the car on the road. In bad weather conditions, some of the sensors will have issues, which is why we would use redundant sensors to overcome such issues; we need enough of these localization markers to ensure obstructions and bad weather conditions don’t affect autonomous driving.

The technology is currently being rolled out on controlled-access roads. It’s a much simpler environment than cities, but when we expand, enter cities and face bad weather, this is going to be an interesting challenge.
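To make the idea of pinpointing the car from measured distances to known markers more concrete, here is a small Python sketch of textbook 2D trilateration. It is an illustration under stated assumptions, not a description of HERE's localization stack: the function name `trilaterate`, the flat local coordinate frame and the noise-free ranges are all hypothetical. Subtracting the first range equation from the other two turns the problem into a 2x2 linear system.

```python
def trilaterate(landmarks, ranges):
    """Estimate (x, y) from three known marker positions and measured ranges.

    landmarks: three (x, y) tuples taken from the map, in metres.
    ranges:    three measured distances to those markers, in metres.
    Assumes exact ranges; real sensors are noisy and would need a
    least-squares fit over more than three markers.
    """
    (x0, y0), (x1, y1), (x2, y2) = landmarks
    r0, r1, r2 = ranges

    # Linear system obtained by subtracting circle 0 from circles 1 and 2.
    a11, a12 = 2 * (x1 - x0), 2 * (y1 - y0)
    a21, a22 = 2 * (x2 - x0), 2 * (y2 - y0)
    b1 = r0**2 - r1**2 + x1**2 - x0**2 + y1**2 - y0**2
    b2 = r0**2 - r2**2 + x2**2 - x0**2 + y2**2 - y0**2

    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-9:
        # Markers on one straight line cannot resolve which side the car is on.
        raise ValueError("landmarks are collinear; position is ambiguous")

    # Cramer's rule for the 2x2 system.
    x = (b1 * a22 - a12 * b2) / det
    y = (a11 * b2 - a21 * b1) / det
    return x, y
```

The collinearity check hints at why markers both along and across the road are useful: markers that all lie on a single line leave the position ambiguous.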

Tell us about the live roads project

DR: Basically our automated driving product strategy has three pillars – one of them is road network data. This data is mostly static, but if it changes it is important that we update it immediately.

Now live roads is on top of that. It is basically a real-time view of the roads: all the variable map information – slippery roads, accidents, pedestrians, visibility conditions – is aggregated in the cloud and cross-checked with other sources. So when a car informs the cloud that its wheels are slipping in a particular location, we quickly have to confirm whether other cars are also experiencing similar issues at this location. Maybe the slipping is caused by snow or rain, which means we need to correlate the slippage information with weather sensors. If that’s the case, we then know the car doesn’t have issues with its tires. Otherwise it’s important to have this information. That’s live roads.
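The cross-checking logic in this answer can be sketched roughly as follows. This is a toy Python illustration, not the live roads service: `SlipReport`, `classify_slip`, the segment identifiers, the set of wet or icy segments and the threshold of two other vehicles are all assumptions. It treats a slip report as a road hazard if other vehicles confirm it or weather data explains it, and otherwise flags a possible issue with the reporting car.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SlipReport:
    """Hypothetical wheel-slip message sent by a vehicle to the cloud."""
    vehicle_id: str
    road_segment: str


def classify_slip(report, all_reports, wet_or_icy_segments, min_other_vehicles=2):
    """Cross-check one slip report against other vehicles and weather data.

    all_reports:         recent SlipReport objects from the whole fleet.
    wet_or_icy_segments: set of segment ids where weather sensors indicate
                         rain, snow or ice (assumed to be available).
    min_other_vehicles:  assumed number of distinct other vehicles needed
                         to treat the slip as confirmed.
    """
    other_vehicles = {
        r.vehicle_id
        for r in all_reports
        if r.road_segment == report.road_segment
        and r.vehicle_id != report.vehicle_id
    }
    confirmed_by_others = len(other_vehicles) >= min_other_vehicles
    weather_explains = report.road_segment in wet_or_icy_segments

    if confirmed_by_others or weather_explains:
        return "road_hazard"
    # Nothing else explains the slip, so the car itself may have a problem.
    return "possible_vehicle_issue"
```

Whether confirmation by other cars and weather data should be combined with "or" or with "and" is a design choice; the sketch uses "or" only to keep the example short.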

What is the biggest challenge in HD mapping?

DR: The biggest challenge is not the mapping at high accuracies, it’s the maintenance. The data we collect is really accurate, but we are very careful with what we use, as we need to maintain the data at a high accuracy and it has to be updated at a constant rate.

HERE has transformed itself from a maps company to a spatial computing and visualization powerhouse. What’s next?

Christopher Lawton (CL): A number of trends are converging to make location the key driver of innovation. Today’s leading businesses require seamless access to location, as it plays a vital role in a variety of their services and applications. Advances in connectivity, the internet of things, social networking and mobile devices are driving demand for cloud-based location services. At the same time, the proliferation of affordable sensors is opening up new possibilities and new business models around location. We are entering a world where everything can be mapped and represented in multiple dimensions. Not only can places be mapped with digital 3D abstractions, but the movement of people and objects can also be charted, providing a rich source of fresh data that brings the map to life.

As the only pure play location company with global reach in the market today, HERE is in a prime position to take advantage of these trends in location. HERE is building on our foundation as the leading map content company to become the leading location cloud company. Our ambition is to reinvent the map, making it the prime source of location intelligence for businesses across industries, capable of providing fresh, predictive and highly contextual answers for every question.

For example, our Enterprise business at HERE helps companies and organizations understand and analyze their operations and assets using our location data and tools. With an advanced understanding of both their mobile and fixed assets, companies can gain new insights into their businesses that help them boost productivity and efficiency. The result is a better top-line and bottom-line for our customers.