How utilities can use drone, helicopter, and satellite data as part of an integrated vegetation management solution

Editor’s note: This article was written as part of EO Hub – a journalistic collaboration between UP42 and Geoawesomeness. Created for policymakers, decision makers, geospatial experts and enthusiasts alike, EO Hub is a key resource for anyone trying to understand how Earth observation is transforming our world. Read more about EO Hub here.

Early in the morning of November 8, 2018, the alarm woke firefighters in Butte County, CA. Ignited by a faulty electricity line, a fire had originated in the hills above several communities, with an east wind driving the flames downhill through these developed areas. It killed 86 people, destroyed nearly 14,000 buildings, and generated $16.5 billion in losses. After nearly two years of investigation, there was no doubt as to the cause: Pacific Gas & Electric, California's biggest utility, was guilty.

In January 2019, the company had already sought bankruptcy protection after accumulating an estimated $30 billion in liability for fires started by its poorly maintained equipment. Investigations revealed that PG&E had caused a total of 1,500 wildfires over the six years before 2018's deadly ‘Camp Fire’ (as the Butte County blaze was known), and that many of these fires could have been prevented by effective vegetation management processes.

Fast forward to 2020, and PG&E was spending $3.7 billion on vegetation management alone. In fact, vegetation management is the largest single operations and maintenance cost for most of the utility companies in North America and Europe. Almost all of this spending goes to contractors who trim, prune and cut back the vegetation.

Utilities: traditionally late adopters

Most utility companies trim around their circuits on a fixed cycle (typically 3 to 5 years), where third-party companies clear all the vegetation within a certain proximity to the infrastructure. This decades-old method requires a lot of boots on the ground—including manual data gathering, in which dozens of people walk below the infrastructure to inspect the vegetation to be cleared, as well as manually verifying that the work has been executed by contractors, and how well it was done.

This is, at least, how things have been done for the past several decades. It has worked well, to an extent, but in the era of widespread digitalization, it seems very outdated today. One of the issues is that utilities typically do not have much incentive to innovate, nor have they been particularly encouraged to do so by regulators. The firms are often also cautious by nature, precisely because of the critical nature of their operations.

However, an industry traditionally slow to innovate is now being challenged to move faster in adopting new digital technologies. Companies across the sector are facing increasing pressure to meet new requirements and respond to the evolving demands of customers and society for greater environmental protection. Digitalization also helps to reduce operating costs and significantly improves the reliability of the service. The trend is clear: utilities will be forced to digitalize and innovate.

Towards integrated vegetation management

One of the spaces in which utilities are trying to innovate is in vegetation management. However, in this sector, being an early adopter often generates cost and risk, and for these naturally cautious companies, the technology adoption cycle can still be measured in years rather than months. Utilities prefer to be ‘second adopters’, waiting for other competitors to validate a new technology or approach, and then learning from their mistakes. This can still mean years of delay.

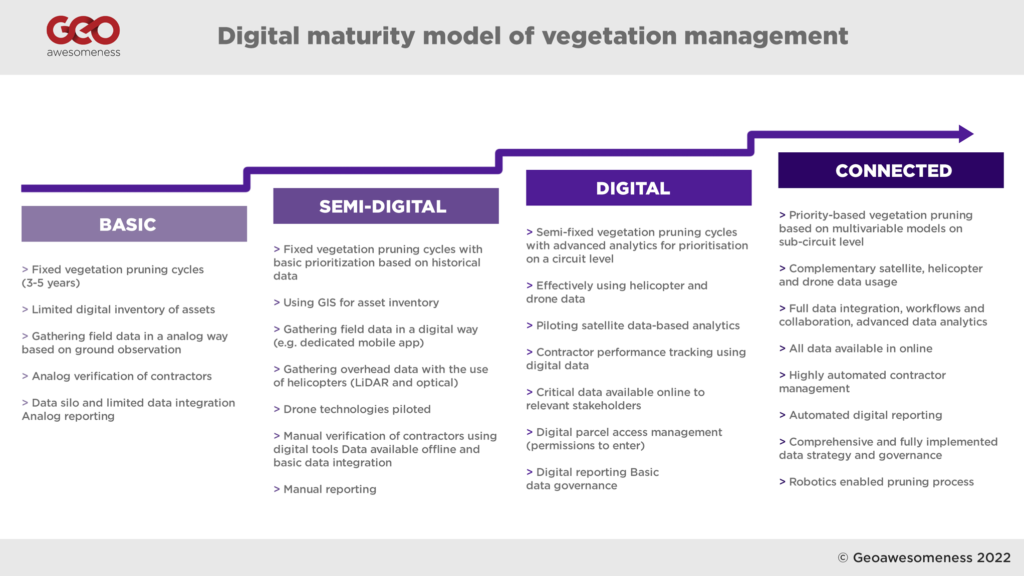

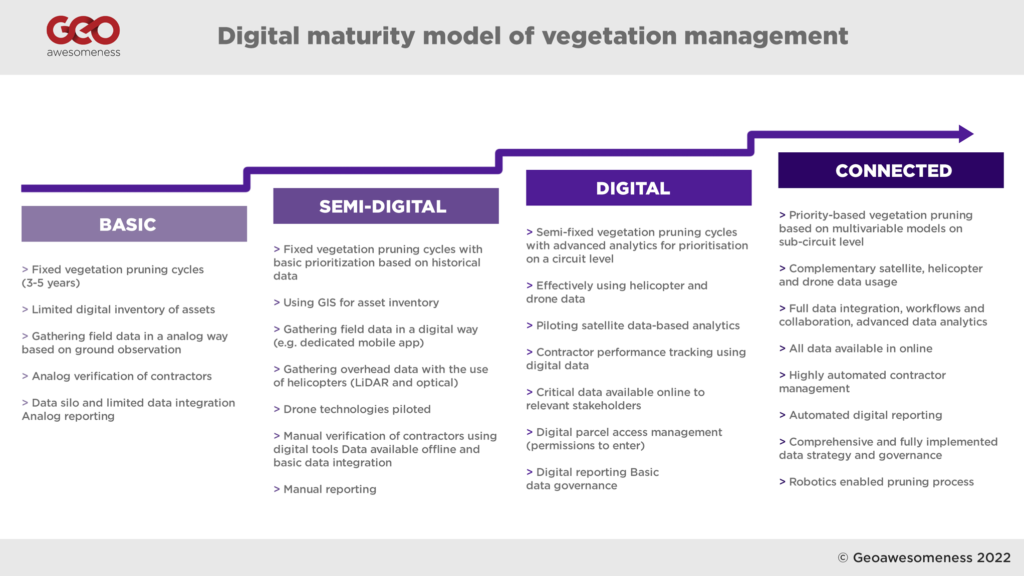

In analyzing the digitalization levels of the major TSOs (Transmission System Operators) and DSOs (Distribution System Operators) across Europe and North America, we have developed a ‘digital maturity model’ of vegetation management processes. Our research revealed that the majority of utilities are between ‘Basic’ and ‘Semi-digital’ levels of maturity, with only a few more advanced organizations (such as National Grid, San Diego Gas & Electric, and E.ON) pushing their digital agenda forward and introducing more advanced integrated vegetation management programs.

Digitalization of analogue processes

For many utilities, vegetation management and asset maintenance processes are still paper-based, consisting of binders full of documents, and one of the first steps to take is the digitalization of these processes. Hydro One, a major Canadian utility company—and the only utility in North America with a dedicated, in-house vegetation management team—recently performed a large-scale digitalization project titled ‘Move 2 Mobile’. For many years, the field staff used a paper-based process, sending and receiving work orders and supporting documents in physical, paper format, which were then completed in the field and sent back to a central administrative location for processing.

The Move 2 Mobile digitalization project integrated all phases of work, connecting a variety of departments and business units to streamline information flow and improve accuracy. From work identification and planning to designing, scheduling, and dispatching, to final work completion, each aspect of the business was granted improved visibility of on-field activities and related customer service.

The new solution was deployed on digital tablets, which were rolled out to over 2,000 field workers across Ontario. Allowing field workers to receive and complete their work digitally also meant tools such as GPS-enabled maps, electronic work packages and schedule optimization could be used, enabling staff to complete tasks with more accuracy and improved productivity.

The project brought more than $16 million in savings realized through improved fieldwork and administrative efficiencies.

Identifying vegetation for pruning using geospatial modelling

Digitalized data and processes enable companies to apply analytics to their vegetation pruning cycles and scheduling. Some companies go a step further, for example using outage data from previous years to determine prioritization.

Using advanced analytics and tools, leading utilities have developed models to create predictive failure and trimming cost curves, to optimize the trim cycle at the individual circuit level.

Companies like San Diego Gas and Electric (SDG&E) are relying significantly on their GIS databases to map all the trees along their power lines and keep geospatial information up to date. (SDG&E is one of the most advanced utilities when it comes to GIS usage, and about 2,000 employees have access to Esri's ArcGIS platform.)

SDG&E’s database contains records for over 460,000 known, specific trees located near its electric power lines, each of which is assigned a unique ID number, allowing changes and activity to be tracked. Every tree in SDG&E’s database is monitored on an annual basis by field workers, using known species growth rates (with additional consideration given to the amount of rainfall occurring during defined periods affecting tree growth) as well as past pruning practices.

SDG&E also models the risk of wildfire by utilizing historical and contextual data, and uses this forecast to determine where vegetation management operations should be focused or prioritized. GIS and database analyses, using metrics including vegetation type, weather, topography, and outage history, can help predict where tree failures are most likely to occur.

Drones and helicopters for vegetation management

SDG&E was one of the earliest adopters of drone and helicopter technology. The company launched its drone program back in 2014 with a focus on oblique images captured for its asset maintenance program, as well as LiDAR laser scanning as an input for network planning and simulations in the PLS-CADD environment. Since 2017/18, however, it has been expanding the drone program to validate vegetation clearances.

Helicopters have been used for transmission line inspections for a long time. Initially, they were used by maintenance workers and ‘linemen’, flying close to the infrastructure and inspecting the lines with binoculars. Gradually, helicopters started to be equipped with cameras and LiDAR sensors to gather data which would be analyzed later in the back office.

Where helicopters are not appropriate (for example for distribution power lines in populated areas), utilities started to experiment with drone technology, which enables the acquisition of even higher resolution data with more targeted inspection images. The challenge for the industry across the globe, however, is that regulations don’t currently permit large-scale ‘Beyond Visual Line of Sight’ operations, which limits the number of circuits that can be mapped to 6-18mi (10-30km) per day, per drone (depending on the terrain).

Despite this limitation, drones have proved to be a great tool to enhance asset management and maintenance processes, with a number of start-ups (such as Hepta, Scopito, Precision Hawk and others) delivering great software tools for power line inspections. However, in terms of LiDAR scanning and vegetation management, the role they play remains complementary to helicopters, rather than comprehensive.

LiDAR has a distinct advantage over photogrammetry solutions based on optical data: laser beams from a LiDAR sensor can penetrate through vegetation. This means that within the data you can map not only the vegetation but also the terrain below it—critical for development of Digital Terrain Models and Digital Surface Models used in the vegetation analysis. Once the 3D point cloud data is captured, the typical scenario includes 3D point cloud classification based on TerraSolid software (used by 90% of the industry) and proximity analysis of points classified as vegetation and conductors.
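To make the proximity analysis step more concrete, here is a minimal sketch in Python. It assumes the point cloud has already been classified into vegetation and conductor points, and it simply flags vegetation points that fall within a chosen clearance distance of any conductor point. The function name, the sample coordinates, and the 3 m clearance are all hypothetical and chosen for illustration; this is not how TerraSolid or any specific vendor implements the analysis.

```python
import numpy as np


def flag_encroaching_vegetation(vegetation_xyz, conductor_xyz, clearance_m):
    """Flag vegetation points closer than clearance_m to any conductor point.

    Returns (mask, nearest), where nearest[i] is the 3D distance from
    vegetation point i to its closest conductor point.
    """
    veg = np.asarray(vegetation_xyz, dtype=float)
    cond = np.asarray(conductor_xyz, dtype=float)
    # Pairwise differences via broadcasting: shape (n_veg, n_cond, 3).
    diffs = veg[:, None, :] - cond[None, :, :]
    dists = np.linalg.norm(diffs, axis=2)
    nearest = dists.min(axis=1)
    return nearest < clearance_m, nearest


# Hypothetical sample data: x, y, z coordinates in metres.
conductors = np.array([[0.0, 0.0, 10.0], [5.0, 0.0, 10.0], [10.0, 0.0, 10.0]])
vegetation = np.array([[5.0, 0.0, 8.0], [5.0, 6.0, 4.0], [0.0, 1.0, 9.0]])

mask, nearest = flag_encroaching_vegetation(vegetation, conductors, clearance_m=3.0)
print(mask)     # which vegetation points violate the clearance
print(nearest)  # distance of each vegetation point to the nearest conductor point
```

The brute-force pairwise comparison is only practical for small samples. A real point cloud with millions of points would need a spatial index such as a k-d tree, and conductors would more likely be modelled as line or catenary geometry than as isolated points.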

Although some providers do utilize photogrammetry data for vegetation analysis, a typical scenario includes LiDAR data—which is also faster to process and capture. The overall solution is very accurate and effective, but the challenge is both the cost and the size of the data. With an average price between US$200-400 per kilometre of data to be captured by helicopter and processed, utilities must also add a significant cost to store and maintain the data captured—one kilometre of captured data can easily translate to 50GB of overall data size.
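As a rough worked example of what these figures mean at network scale, the sketch below multiplies them out for a hypothetical 1,000 km network. The per-kilometre price range and the 50GB-per-kilometre figure come from the paragraph above; the network length, the function name, and the decimal conversion (1TB = 1,000GB) are assumptions made for illustration.

```python
def survey_estimate(network_km, cost_per_km_low=200.0, cost_per_km_high=400.0,
                    gb_per_km=50.0):
    """Estimate helicopter LiDAR survey cost (USD) and data volume (TB)."""
    return {
        "cost_low_usd": network_km * cost_per_km_low,
        "cost_high_usd": network_km * cost_per_km_high,
        # Decimal terabytes: 1 TB = 1,000 GB.
        "storage_tb": network_km * gb_per_km / 1000.0,
    }


# Hypothetical 1,000 km network.
estimate = survey_estimate(1000)
print(estimate)
```

Under these assumptions, a single survey of 1,000 km would cost between US$200,000 and US$400,000 and produce around 50TB of data, before any storage or maintenance costs are added.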

Satellite data seems to be the future

The solution for these challenges seems to be satellite data. Previously considered to be too inaccessible and inaccurate, Earth Observation data is turning out to be the future of vegetation management. More satellites have been launched into space in the past two years than in the entire history of the space age prior to that point. High-resolution optical images (30cm) and synthetic aperture radar (SAR) data are now available from companies such as Airbus, with its new Pléiades Neo constellation, and Iceye, which revisit any place on the planet several times a week, or even within a single day.

Satellite data generates very useful optical images of the vegetation, which, combined with Deep Learning models, can map vegetation around utility companies’ infrastructure. The data also enables creation of Digital Elevation Models based on stereo images and SAR data, with resolutions to the level of 50cm. Satellite data is far less accurate than drone or helicopter data, but it gives utilities the full picture of the entire network, instantly, which is a real game-changer.

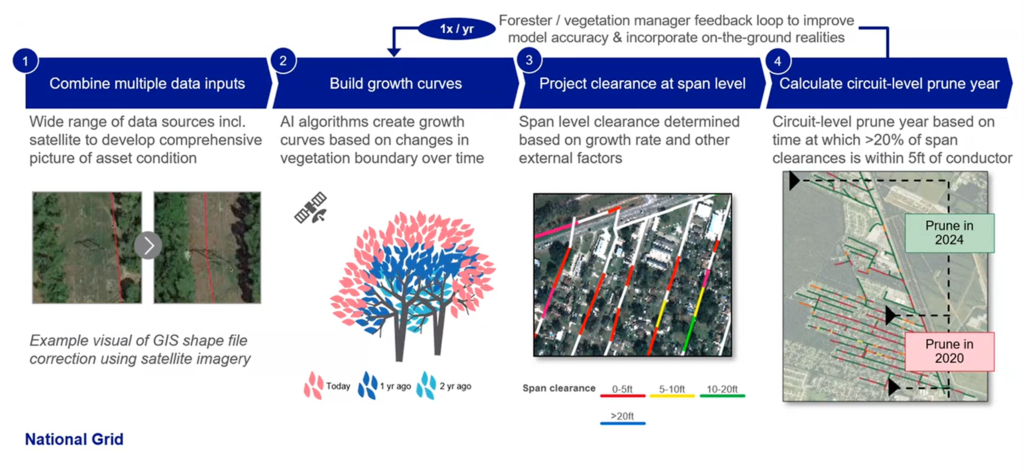

Successful implementations from two main providers, AI Dash and LiveEO, suggest that the lower resolution might not, in fact, be much of an issue. Interestingly, at the end of 2020 AI Dash received $6m in ‘series A’ funding from an investment fund run by National Grid—one of the biggest utility companies in the U.S. and the U.K. The investment was followed by an extensive pilot project implemented by National Grid through 2020 and 2021 as part of the Vegetation Management Optimization initiative.

As part of the pilot project, four circuits were preselected. For the selected circuits, National Grid and AI Dash took available GIS data layers and combined them with historical satellite data. AI Dash models combined satellite-based Digital Elevation Models and optical data to detect the vegetation, and based on the satellite data from different points in time AI Dash made a ‘vegetation change detection analysis’, generating prediction models for vegetation growth. The models were then intersected with GIS data to determine where the span level clearance creates a risk. Finally, based on the data the model predicts when a particular sub-circuit section should be cleared.
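The general idea of turning two observations into a clearing date can be sketched as follows. This is a deliberately simplified illustration and not AI Dash's actual model, which is not described in detail here: it assumes linear growth between two canopy height measurements, and all names, heights, and clearance values are hypothetical.

```python
from datetime import date


def growth_rate_m_per_year(height_then, height_now, date_then, date_now):
    """Average canopy growth in metres per year between two observations."""
    years = (date_now - date_then).days / 365.25
    return (height_now - height_then) / years


def years_until_breach(height_now, conductor_height, required_clearance, rate):
    """Years until the canopy enters the required clearance zone.

    Assumes linear growth at `rate` metres per year (a simplification).
    """
    gap = conductor_height - required_clearance - height_now
    if gap <= 0:
        return 0.0  # clearance is already violated
    if rate <= 0:
        return float("inf")  # no measurable growth towards the line
    return gap / rate


# Hypothetical span: canopy measured at 6.0 m, then 7.0 m two years later.
rate = growth_rate_m_per_year(6.0, 7.0, date(2019, 1, 1), date(2021, 1, 1))

# Conductor at 12 m with a 3 m required clearance, using a rounded 0.5 m/year.
print(rate)
print(years_until_breach(7.0, 12.0, 3.0, rate=0.5))
```

Ranking spans by the resulting time to breach is one simple way to decide which sub-circuit sections to clear first. A production model would likely account for species, rainfall, and seasonality rather than a single linear rate.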

The pilot project included ground level verification of the results in the field, and this confirmed the model had demonstrated 83-100% accuracy of predictions, depending on the circuit. Overall, the pilot has been very positively assessed by the National Grid operational team, and the project is being further implemented within the organization. The use of satellites for time prioritization is expected to bring up to 12% in operational savings. National Grid also sees opportunities to use such data for other use cases, such as asset planning and storm restoration amongst others.

Enabling satellite data for large scale processing

Interestingly, AI Dash and LiveEO are both supported by UP42, a developer platform providing APIs for accessing satellite data and infrastructure to process and analyze it at scale. Such platforms are critical for the development of startups built on satellite data because they remove key barriers to adopting such solutions. Traditionally, accessing satellite data has been highly challenging, as satellite companies have had no incentive to provide data to clients other than the military and governments. On top of that, building satellite data processing workflows from scratch has been a difficult barrier to overcome. Platforms such as UP42 streamline the entire process and enable companies to focus on building analytical solutions rather than infrastructure, which is the critical enabler for solutions powered by Earth Observation data.

Satellite vs Helicopters vs Drones

The case studies suggest that satellite imagery has several advantages over traditional methods, as well as drones and helicopters—especially when it comes to instant availability and coverage for the entire network, as well as cost.

Additionally, the satellite data is accessed via APIs from satellite data providers, which means that the data governance process is much more streamlined compared to the large-scale data gathering and processing required with drone and helicopter data.

On the other hand, drone and helicopter data can be of a very high resolution, providing results with nearly 100% accuracy.

It seems that the winning system will inevitably combine inputs from all of these methods. Satellite-based models combined with other historical data will identify risk areas, whereas drones or helicopters can be deployed for more detailed surveys—and yes, even field teams will continue to be used for thorough ground-level investigations.

Above all, the analysis of different case studies suggests that no two utilities are the same. Some vegetation management programs rely on pen, paper, and Excel sheets; other utilities work with highly complex AI-based risk models. And of course, the end goal is not to determine which technology is better, but simply to provide solutions to the challenges.