What Google unleashed at Google I/O 2017 that every Geogeek should know

Google is now almost omnipresent. It is a lot more than a search engine and has always updated itself to serve what the masses require. From a period when needs shaped Google’s innovation, we have reached a time when Google’s innovation shapes the trend. Location data, navigation data and indoor positioning have become an integral part of any application built by Google. Not to forget that Google earns from ads, and soon from location-based ads. AR and VR also depend heavily on positioning, whether relative to real surroundings or within a virtual environment. Some insights on Google I/O 2017 will give a better outline of where Google is moving and where it is taking the geospatial industry.

Google I/O 2017

Google I/O 2017 did not fail to wow the crowd and the developer community with its new initiatives, updates and viral announcements. Pichai, welcomed by initial shouts of ‘I love you’, presented the keynote address. His speech opened on a note of success: Android has passed 2 billion users and is still counting. Pichai also stressed the ‘AI First’ approach that Google is moving towards, and spoke about the Google Lens initiative, Cloud TPUs, Google Assistant updates and the future of Google AR and VR. After all these announcements on what Google is doing and where it is moving, Pichai was seen as a tech rock star. Beyond the keynote address, Google I/O 2017 had many new announcements by the VPs. Here’s an outline of the significant announcements and an abbreviated video, coupled with the transformation each could bring to the geospatial industry:

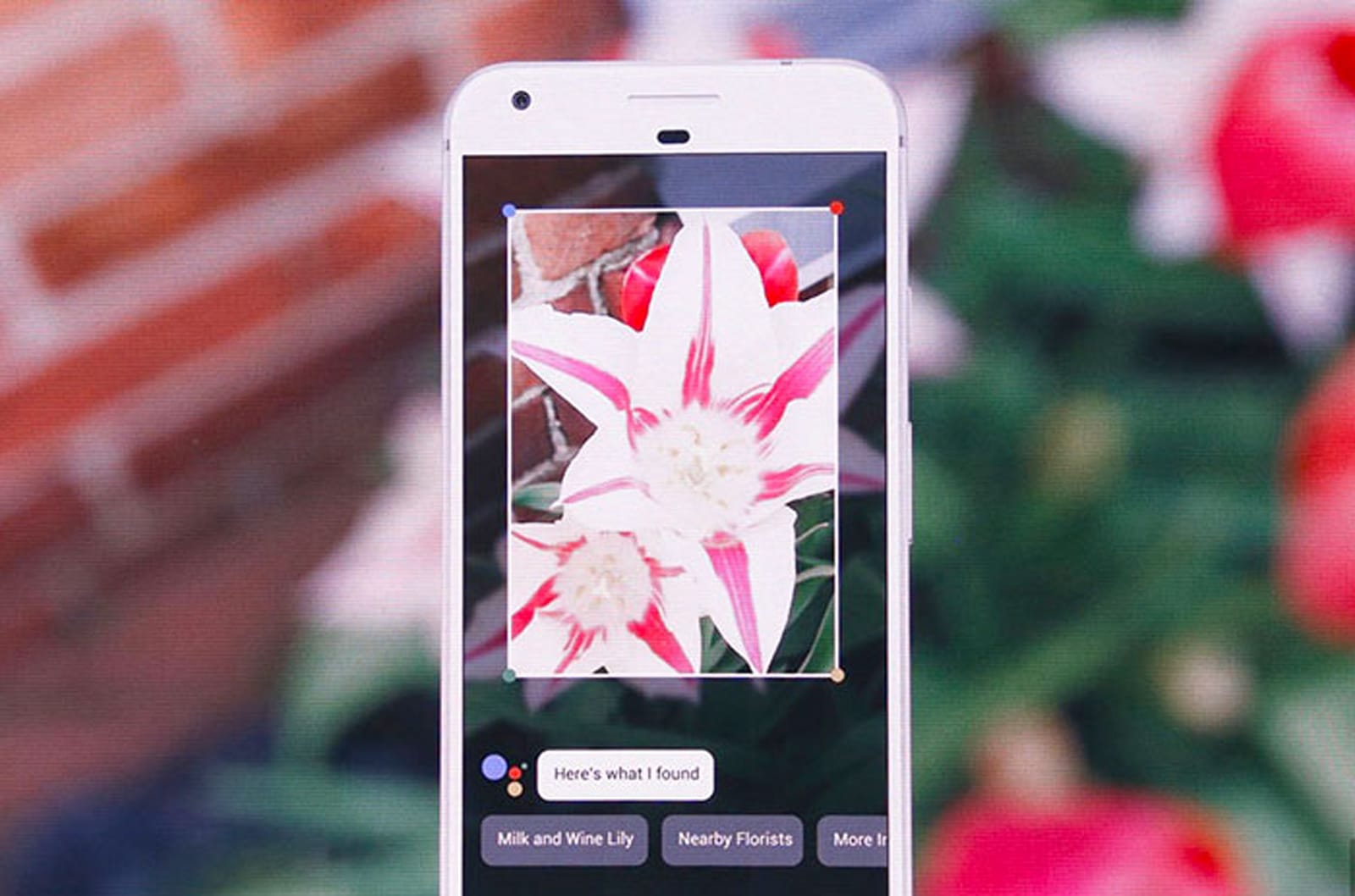

1. With Google Lens you can see more

Courtesy : CNET

Moving ahead from text and voice searches, Google Lens will now enable searching through the camera’s vision. Google Lens can identify objects and name them with the help of Google Assistant. Another example is even more interesting: when you focus the camera on a place (restaurant, museum, hotel etc.), Google Lens identifies it and automatically brings up its ratings and other information from Google. Google Lens also lets the user connect automatically to a Wi-Fi network just by scanning the network name and password on the back of the router. For a geogeek this is an exciting development, as it could pave the way for drastically reducing the time lag in automatic traffic sign recognition on self-driving cars. Optimistically, Lens may come to dominate other forms of search input in the near future.

2. Cloud TPUs for the future AI

Courtesy : Google Inc

Google is venturing into the cloud business, and its excellence in AI is an additional boost. While Google’s previous TPUs represent a next-generation chip custom-built for AI workloads, the new hardware, which works with the TensorFlow platform, will now be available to developers on Google’s cloud service. The new chips nearly double the speed of Google’s previous version built in 2015, performing 180 teraflops (FLOPS: floating-point operations per second) against the previous generation’s 92 teraflops. By combining TPUs and TensorFlow, Google is effectively transforming its cloud computing platform into an Android for AI. The introduction of Cloud TPUs will put Google at the forefront of AI advancements.

Google AI is capable of identifying and removing noise (the unwanted features), as shown above. This should be well received by developers working on tools to remove noise from images obtained by any remote sensing technique. Many tools developed so far subtract noise only with a considerable degree of human intervention. AI running on Cloud TPU hardware could automate this and do the hard work for you.

3. Google Assistant with more sense of understanding

With Google Assistant fused with Google Lens, you can add more meaning to what you see. If you point your camera at a dish, Google Assistant can let you order it and even pay for it, as simply as interacting with a real person. The update also lets you type your queries, since we hesitate to use voice commands in public. Assistant has now entered the iOS world as well, and we will witness the rollout of Google Assistant in regional languages later this year. Google confirmed that it is launching an Assistant developer kit that can be integrated with third-party devices, so brace yourself to command the Assistant across devices.

With Google Assistant integrated into the in-car entertainment (infotainment) system, you can ask it to ‘List the Chinese restaurants en route home’ when you leave your office for home. The intelligent Google Assistant will know the locations of your office and home and the usual route you take, from which it will be able to fetch appropriate responses, exactly how a GIS technician would want it.
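For readers who want to see what an ‘en route’ query involves on the GIS side, here is a minimal sketch of a corridor filter: it keeps only the places that lie within a chosen distance of a route polyline. This is an illustration and not how Google Assistant works internally; the function names, the sample coordinates and the 200 m threshold are all assumptions made for the example, and the flat-plane projection is only reasonable over city-scale distances.

```python
import math

EARTH_RADIUS_M = 6_371_000

def to_local_xy(lat, lon, lat0, lon0):
    """Project a lat/lon pair to metres on a flat plane centred on (lat0, lon0)."""
    x = math.radians(lon - lon0) * math.cos(math.radians(lat0)) * EARTH_RADIUS_M
    y = math.radians(lat - lat0) * EARTH_RADIUS_M
    return x, y

def point_segment_distance(p, a, b):
    """Shortest planar distance from point p to the segment a-b."""
    px, py = p
    ax, ay = a
    bx, by = b
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0:
        return math.hypot(px - ax, py - ay)
    # Clamp the projection of p onto the segment to the range [0, 1].
    t = ((px - ax) * dx + (py - ay) * dy) / seg_len_sq
    t = max(0.0, min(1.0, t))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))

def places_en_route(route, places, max_detour_m):
    """Return names of places within max_detour_m of the route.

    route  : list of (lat, lon) with at least two points
    places : list of (name, lat, lon)
    """
    lat0, lon0 = route[0]
    path = [to_local_xy(lat, lon, lat0, lon0) for lat, lon in route]
    result = []
    for name, lat, lon in places:
        p = to_local_xy(lat, lon, lat0, lon0)
        nearest = min(point_segment_distance(p, a, b) for a, b in zip(path, path[1:]))
        if nearest <= max_detour_m:
            result.append(name)
    return result

# Hypothetical commute and restaurants, purely for illustration.
route = [(0.0, 0.0), (0.0, 0.01), (0.01, 0.01)]
restaurants = [("Close to the road", 0.0005, 0.005), ("Across town", 0.02, 0.0)]
print(places_en_route(route, restaurants, max_detour_m=200))
```

A production system would use road-network travel time rather than straight-line distance, but the corridor idea is the same.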

4. Google Home gets more proactive

Courtesy: Slash Gear

Google’s smart speaker, Google Home, can now do a lot more than just respond. Yes, Google Home gets proactive and notifies you of traffic delays, event reminders and so on. Google Home also allows free calls across the USA and Canada, and it supports personalized contacts for multiple users through voice recognition. For instance, when you say ‘call my mom’, Google Home connects to your mom, and when your spouse says ‘call my mom’, it connects to his or her mom instead.

You can also ask Google Home for a route map, which will be displayed on your phone through Google Maps, or you can ask for your calendar to be displayed on any streaming device connected to Chromecast.

Now, what if Google Home gets homier and notifies you of what is happening in and around your location? I think this is not far from reality. Imagine Home notifying you of an event in your surroundings or an offer at nearby stores and restaurants. Beyond that, how about Home notifying you when a person enters your home? With the help of geofencing technology, Google Home could form an effective security system as well.
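To make the geofencing idea concrete, here is a minimal sketch of a circular geofence: it measures the great-circle distance between a tracked position and the centre of the fence, and reports an event whenever a track crosses the boundary. Nothing here is a Google Home API; the function names, the coordinates and the 50 m radius are assumptions chosen for illustration.

```python
import math

EARTH_RADIUS_M = 6_371_000

def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

def inside_geofence(point, center, radius_m):
    """True when point lies within radius_m of the fence centre."""
    return haversine_m(*point, *center) <= radius_m

def crossing_events(track, center, radius_m):
    """Return ("enter" | "exit", point) for each boundary crossing along a track."""
    events = []
    was_inside = None
    for point in track:
        now_inside = inside_geofence(point, center, radius_m)
        if was_inside is not None and now_inside != was_inside:
            events.append(("enter" if now_inside else "exit", point))
        was_inside = now_inside
    return events

# Hypothetical home location and a visitor's track, for illustration only.
home = (12.9716, 77.5946)
track = [(12.9816, 77.5946), (12.9717, 77.5946), (12.9816, 77.5946)]
for kind, point in crossing_events(track, home, radius_m=50):
    print(kind, point)
```

A real security use would also need to know whose position is being tracked, which is a harder problem than the distance check itself.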

5. Google Photos saves us a lot of work

The three compelling updates to Google Photos facilitated by AI are Suggested Sharing, Shared Libraries and Photo Books.

Suggested Sharing automatically groups the photos of a specific person through AI and lets you share them with that person. This comes as a handy remedy when you click photos generously at a party and find it hard to send specific photos to specific people. Google Photos can organize this in a matter of seconds, and all you have to do is click on share.

Shared Libraries will automatically (through AI) share photos with a prescribed group. Of course, it’s customizable too: for example, you can filter to share only your children’s photos with your family group.

With Photo Books, Google tries to bring back the old joy of having a hard-copy photo. This service is available only in the US as of now; users will be able to ask its AI to trawl through all the photos it has of a person and automatically generate a picture book from them.

For every geo enthusiast out there, here is a cool functionality that lets you identify a place, a building or a river that you photographed and later forgot. Don’t worry: open the picture with Google Lens to find out the name of the place, building or river. This has been made possible with the help of geotagged pictures, which record exactly where you took the picture and help Google Lens deduce the rest.
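As a rough illustration of how a geotag can narrow down what a photo shows, the sketch below converts a position stored as degrees, minutes and seconds (the form commonly used in photo geotags) to decimal degrees and picks the nearest entry from a small list of known places. This is not how Google Lens works; the tiny gazetteer, the sample geotag and the function names are assumptions for the example, and the distance uses a flat-earth approximation that is only suitable for short ranges.

```python
import math

def dms_to_decimal(degrees, minutes, seconds, ref):
    """Convert degrees/minutes/seconds plus a hemisphere letter to decimal degrees."""
    value = degrees + minutes / 60 + seconds / 3600
    return -value if ref in ("S", "W") else value

def approx_distance_m(lat1, lon1, lat2, lon2):
    """Equirectangular approximation of distance in metres (short ranges only)."""
    x = math.radians(lon2 - lon1) * math.cos(math.radians((lat1 + lat2) / 2))
    y = math.radians(lat2 - lat1)
    return 6_371_000 * math.hypot(x, y)

def nearest_place(lat, lon, gazetteer):
    """Return the gazetteer entry closest to the given position."""
    return min(gazetteer, key=lambda p: approx_distance_m(lat, lon, p["lat"], p["lon"]))

# Illustrative gazetteer; a real lookup would query a places database.
GAZETTEER = [
    {"name": "Eiffel Tower", "lat": 48.8584, "lon": 2.2945},
    {"name": "Louvre Museum", "lat": 48.8606, "lon": 2.3376},
]

# Hypothetical geotag read from a photo: 48°51'30.0" N, 2°17'40.0" E.
lat = dms_to_decimal(48, 51, 30.0, "N")
lon = dms_to_decimal(2, 17, 40.0, "E")
print(nearest_place(lat, lon, GAZETTEER)["name"])
```

The geotag only supplies candidates; recognising which nearby landmark is actually in the frame still needs the image itself.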

6. Android O Beta

Courtesy: Techcrunch

Google rolled out the beta of Android O, the latest iteration of Android. The Android team blew through a range of new updates, including new emoji, picture-in-picture video, security enhancements, and more AI capabilities built into the device. The new operating system will also be able to auto-fill logins and passwords you’ve stored with Google on other devices. For example, if you’ve logged into Twitter on Google Chrome on a laptop, when you install the Twitter app on an Android phone, the phone will suggest you log in with the Twitter account you’ve already used. Android O will also likely increase Android devices’ battery life through efficiency enhancements to the way the operating system works. More specs are expected at Google’s product launch this autumn. The new updates don’t have anything to awe a geographic person, except for the fluid experiences (picture-in-picture mode), which let us watch a video while navigating and relieve us of boredom on a long drive; you can also minimize the map to watch an enlarged video, depending on your preference.

7. Android Go

Google will release Android Go, a lighter version of its mobile operating system for devices with less than 1 GB of memory. Google intends to tap into developing nations with this lighter version, helping users without strong network connectivity. It will include YouTube Go, a version of the streaming app that lets users save videos to watch offline, conserving data usage, and share videos from one device to another without a data connection. Google stated that the launch is expected in 2018. What might bother a geogeek is that every app moves to a lighter version in Android Go, and Google Maps may not be an exception, which would be unwelcome as it could restrict the effectiveness of the app.

8. Talking about VR and AR

Google announced that the Daydream standalone headsets from partners including HTC and Lenovo require no smartphone or PC. Google also touched upon Project Tango, an augmented reality platform that can understand your surroundings and provide information as you walk through an indoor location. Google calls this the Visual Positioning Service (VPS), which helps you orient yourself indoors just as GPS does outdoors. Many indoor venues, including malls and museums, are adopting this technology to give customers a better experience.

Ultimately, all the announcements depend in one way or another on location, navigation or indoor positioning, and will definitely contribute to the generation of location-based data. Tapped properly, this data can reveal human trends and patterns that can either be commercialised or used to help the human community as a whole.